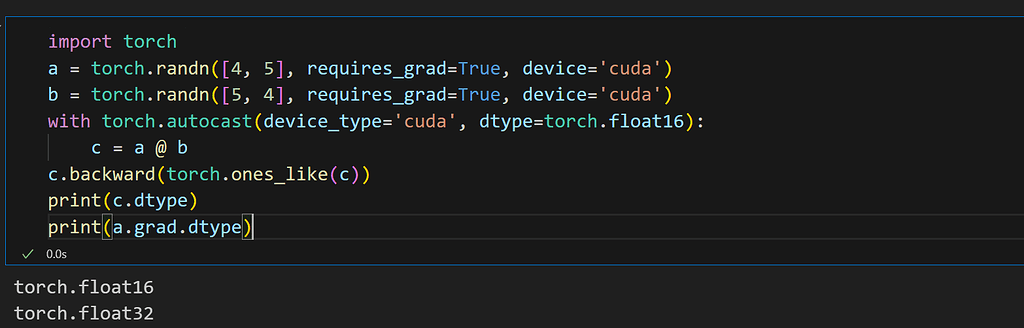

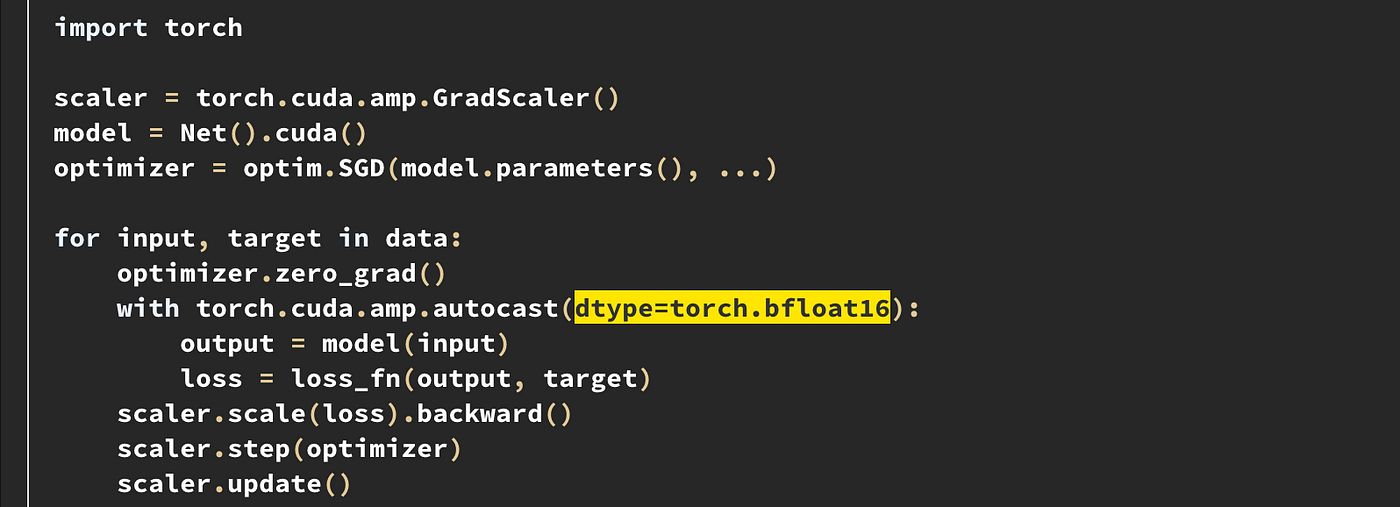

PyTorch on X: "For torch <= 1.9.1, AMP was limited to CUDA tensors using `torch.cuda.amp.autocast()` v1.10 onwards, PyTorch has a generic API `torch.autocast()` that automatically casts * CUDA tensors to
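
A minimal sketch of the generic `torch.autocast()` API mentioned in the post above, assuming PyTorch 1.10 or later. The quoted post is cut off, so the device and dtype chosen here (CPU with `torch.bfloat16`) are assumptions of this example, picked so that it runs without a GPU. The toy `Linear` model and random input are hypothetical.

```python
import torch

# Hypothetical toy model and input, used only for illustration.
model = torch.nn.Linear(8, 4)
x = torch.randn(2, 8)

# Generic API: the target device is selected with `device_type`.
# CPU + bfloat16 is an assumption of this sketch, not taken from the quoted post.
with torch.autocast(device_type="cpu", dtype=torch.bfloat16):
    y_amp = model(x)  # linear layers are autocast to the lower-precision dtype

# Outside the context manager the same module runs in its default float32.
y_fp32 = model(x)

# The parameters themselves are never converted; only the op runs in bfloat16.
print(y_amp.dtype, y_fp32.dtype, model.weight.dtype)
```

On a CUDA machine the same pattern would use `device_type="cuda"`, typically with `torch.float16`.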

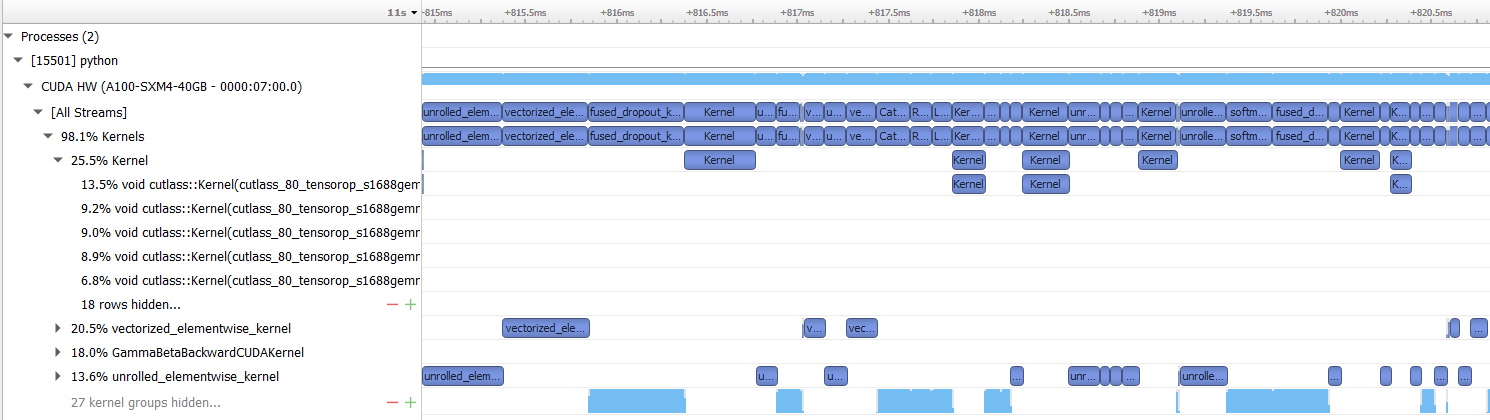

PyTorch on X: "Running Resnet101 on a Tesla T4 GPU shows AMP to be faster than explicit half-casting: 7/11 https://t.co/XsUIAhy6qU"

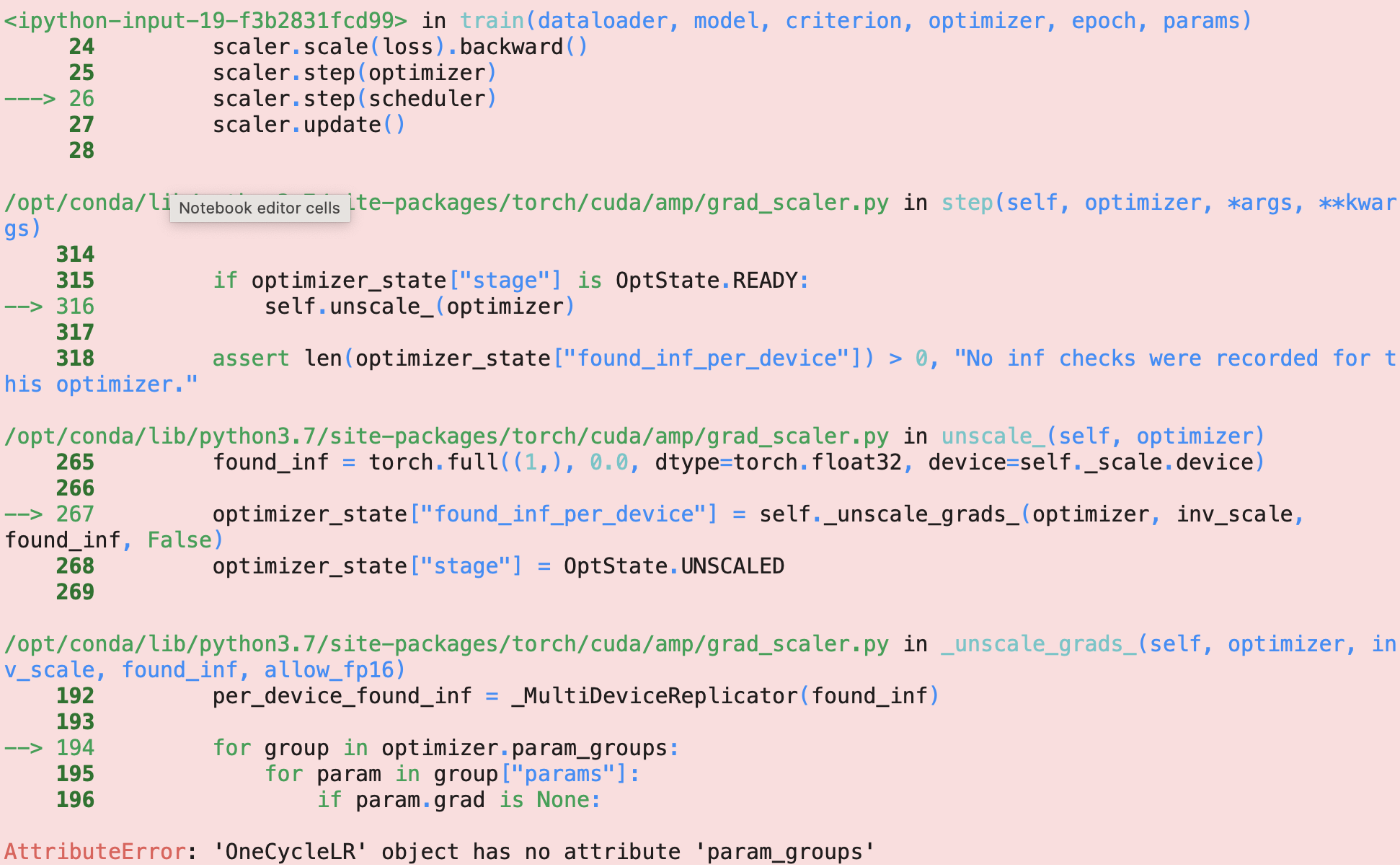

What is the correct way to use mixed-precision training with OneCycleLR - mixed-precision - PyTorch Forums

torch.cuda.amp, example with 20% memory increase compared to apex/amp · Issue #49653 · pytorch/pytorch · GitHub

torch.cuda.amp.autocast causes CPU Memory Leak during inference · Issue #2381 · facebookresearch/detectron2 · GitHub

AttributeError: module 'torch.cuda.amp' has no attribute 'autocast' · Issue #776 · ultralytics/yolov5 · GitHub

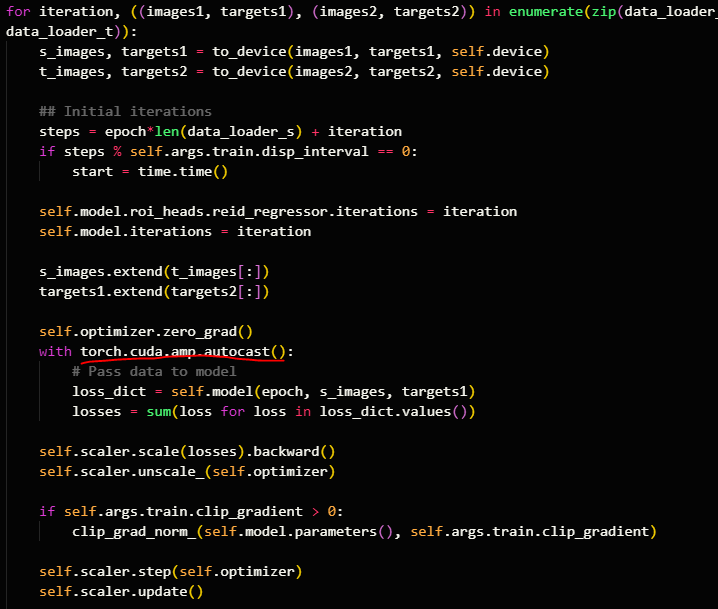

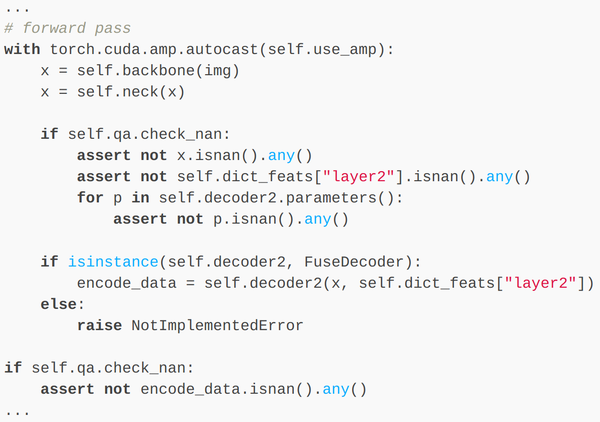

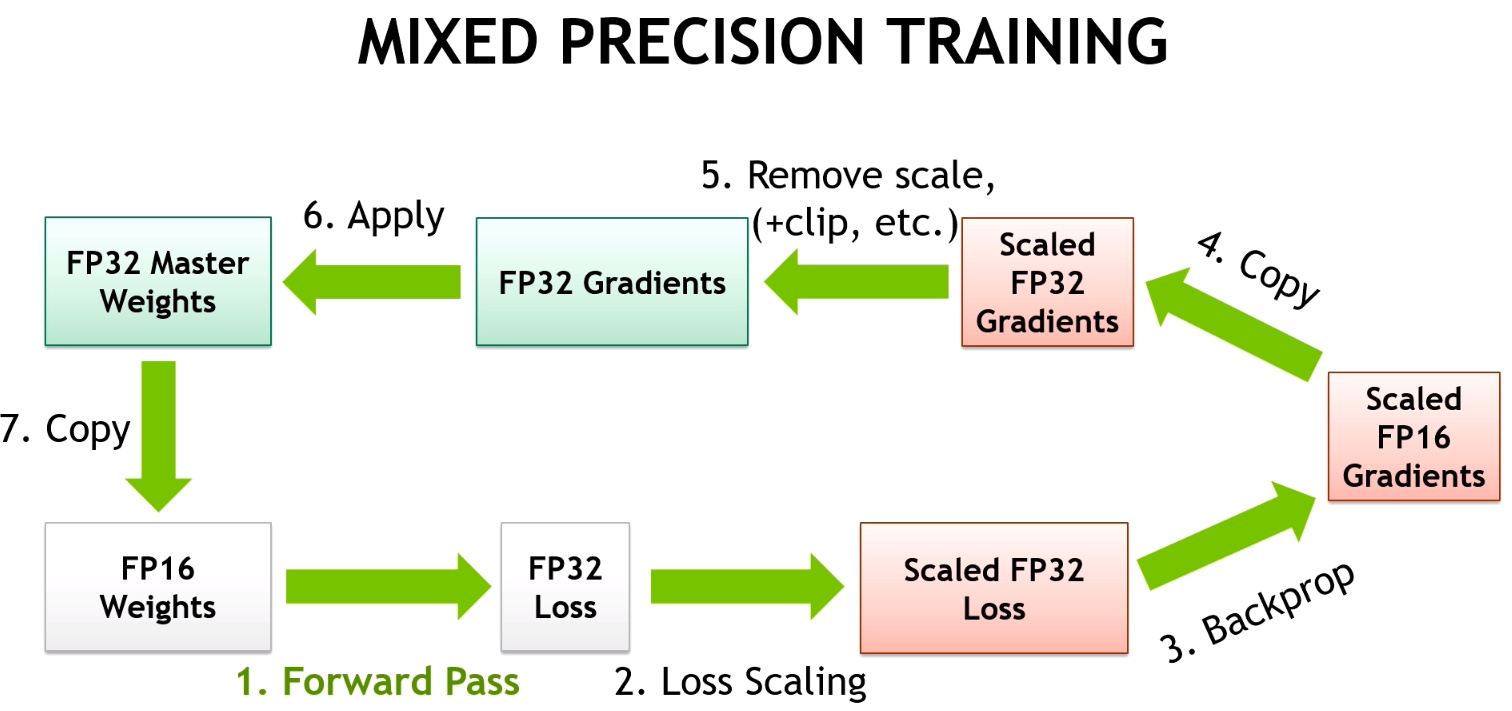

Rohan Paul on X: "📌 The `with torch.cuda.amp.autocast():` context manager in PyTorch plays a crucial role in mixed precision training 📌 Mixed precision training involves using both 32-bit (float32) and 16-bit (float16)
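
A rough sketch of where the autocast context manager sits in a single mixed-precision training step. The quoted post is truncated, so everything beyond the use of autocast is an assumption of this example: the toy model, the random data, the SGD optimizer, the use of `torch.cuda.amp.GradScaler` for loss scaling, and the fallback to `torch.bfloat16` on CPU so the snippet runs without a GPU.

```python
import torch
import torch.nn.functional as F

# Pick float16 on CUDA; fall back to bfloat16 on CPU (assumption of this sketch).
use_cuda = torch.cuda.is_available()
device = "cuda" if use_cuda else "cpu"
amp_dtype = torch.float16 if use_cuda else torch.bfloat16

# Hypothetical toy model and data, used only for illustration.
model = torch.nn.Linear(16, 1).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
inputs = torch.randn(32, 16, device=device)
targets = torch.randn(32, 1, device=device)

# Loss scaling guards float16 gradients against underflow; disabled on CPU.
scaler = torch.cuda.amp.GradScaler(enabled=use_cuda)

optimizer.zero_grad()

# Only the forward pass and the loss computation run under autocast.
with torch.autocast(device_type=device, dtype=amp_dtype):
    preds = model(inputs)                      # runs in the lower-precision dtype
    loss = F.mse_loss(preds.float(), targets)  # loss evaluated in float32

# Backward and the optimizer step happen outside the autocast context.
scaler.scale(loss).backward()
scaler.step(optimizer)  # unscales gradients; skips the step if they are inf/NaN
scaler.update()
```

The model weights stay in float32 throughout; autocast only chooses the dtype per operation, which is what distinguishes this from explicitly calling `.half()` on the model.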